You now have the ability to query all the logs, without the need to set up any infrastructure or ETL. Run a simple query: SELECT * FROM elb_logs_raw_native WHERE elb_response_code = '200' LIMIT 100 Therefore, when you add more data under the prefix, e.g., a new month’s data, the table automatically grows. Note that table elb_logs_raw_native points towards the prefix s3://athena-examples/elb/raw/. You created a table on the data stored in Amazon S3 and you are now ready to query the data. You can interact with the catalog using DDL queries or through the console. It’s highly durable and requires no management.

Athena has an internal data catalog used to store information about the tables, databases, and partitions. After the statement succeeds, the table and the schema appears in the data catalog (left pane). A regular expression is not required if you are processing CSV, TSV or JSON formats.

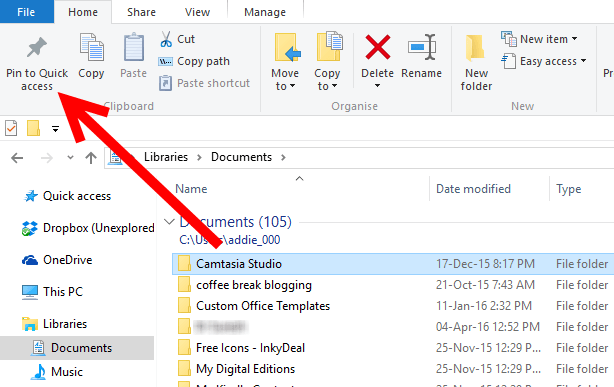

You can also use Athena to query other data formats, such as JSON. You can specify any regular expression, which tells Athena how to interpret each row of the text. Note the regular expression specified in the CREATE TABLE statement. LOCATION 's3://athena-examples/elb/raw/' Copy and paste the following DDL statement in the Athena query editor to create a table.ĬREATE EXTERNAL TABLE IF NOT EXISTS elb_logs_raw_native ( You can create tables by writing the DDL statement on the query editor, or by using the wizard or JDBC driver. If you are familiar with Apache Hive, you may find creating tables on Athena to be familiar. There is a separate prefix for year, month, and date, with 2570 objects and 1 TB of data. Top 10 Performance Tuning Tips for Amazon Athenaįor this example, the raw logs are stored on Amazon S3 in the following format.Since you’re reading this blog post, you may also be interested in the following: We show you how to create a table, partition the data in a format used by Athena, convert it to Parquet, and compare query performance. In this post, we demonstrate how to use Athena on logs from Elastic Load Balancers, generated as text files in a pre-defined format. For more information, see Athena pricing. You can save on costs and get better performance if you partition the data, compress data, or convert it to columnar formats such as Apache Parquet. This eliminates the need for any data loading or ETL.Īthena charges you by the amount of data scanned per query. Athena uses an approach known as schema-on-read, which allows you to project your schema on to your data at the time you execute a query. You can also use complex joins, window functions and complex datatypes on Athena.

You can write Hive-compliant DDL statements and ANSI SQL statements in the Athena query editor. It also uses Apache Hive to create, drop, and alter tables and partitions. Athena works directly with data stored in S3.Īthena uses Presto, a distributed SQL engine to run queries. You don’t even need to load your data into Athena, or have complex ETL processes. Athena is serverless, so there is no infrastructure to set up or manage and you can start analyzing your data immediately. May 2022: This post was reviewed for accuracy.Īmazon Athena is an interactive query service that makes it easy to analyze data directly from Amazon S3 using standard SQL.
